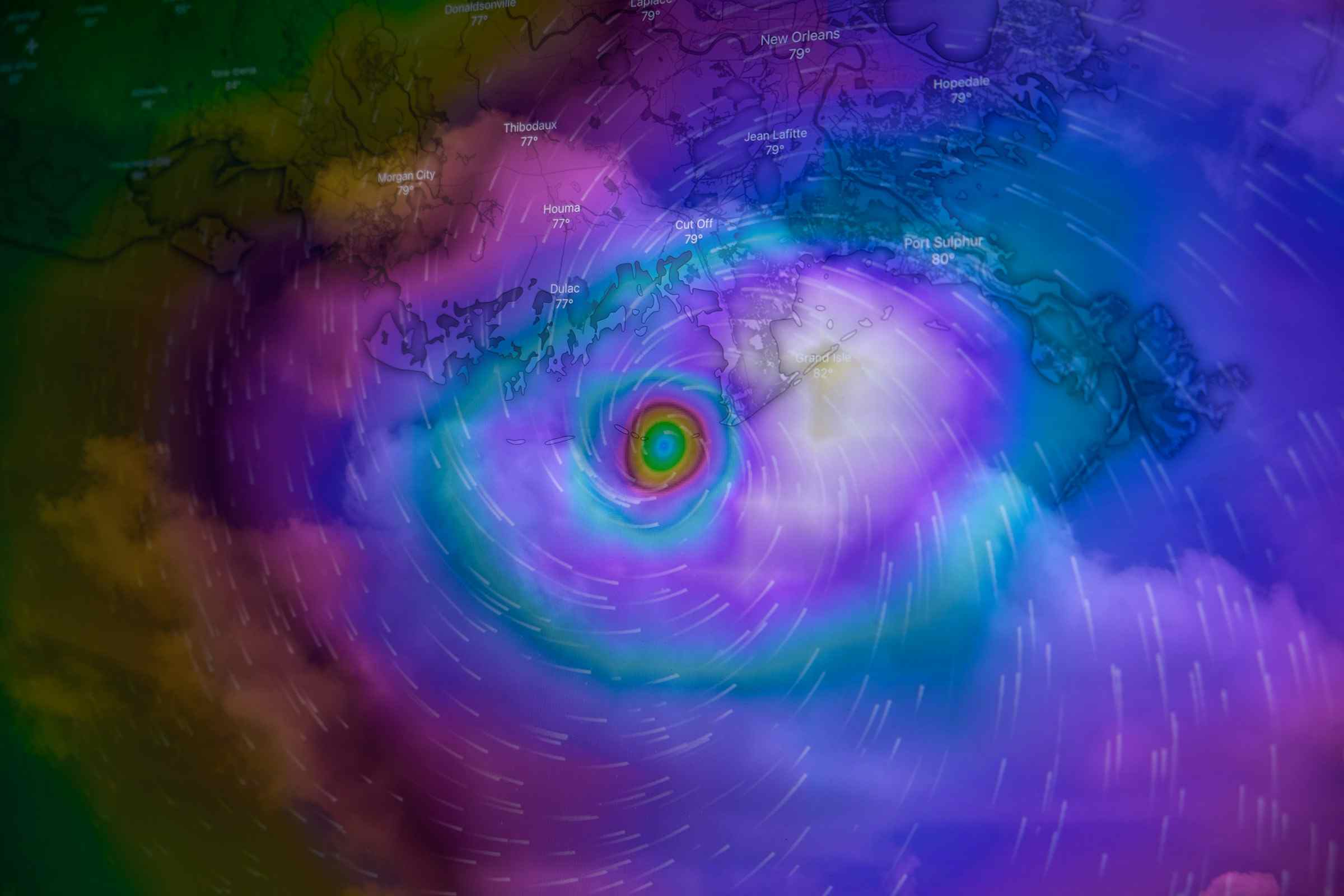

Why Real-Time Catastrophe Response Still Challenges Insurers

If you look strictly at capability, the industry should be operating in near real time during catastrophe events.

Carriers today can ingest live hazard data, overlay it with exposure, and identify impacted policyholders almost immediately. Event feeds from agencies such as the U.S. National Weather Service, combined with geospatial analytics and AI augmentation, allow near-instant visibility into where losses are likely to occur.

That’s a meaningful shift from even five years ago. But real-time visibility has not translated into real-time catastrophe response. Not at scale, and not consistently.

Decision Latency Is Now the Primary Constraint

Catastrophe risk intelligence is on point because we've got more data than ever. Organizations like the National Oceanic and Atmospheric Administration (NOAA), through its National Centers for Environmental Information (NCEI), track everything from wind gusts and rainfall intensity to flood levels and storm paths with near real-time precision. Gallagher's big data reports highlight IoT revolutions in claims, and Aon's Event Analytics promises event-by-event loss forecasting. But here's the gut punch: that data doesn't flow into decisions.

We might have nailed data availability, but we flunked the handoff to action. This isn't a failure of catastrophe risk intelligence. It's a failure of workflows, silos, and decision engines, and it's costing us billions in delayed recoveries, regulatory fines, and lost policyholder trust. In 2025 alone, global insured cat losses hit $130B, yet only 54% of re/insurers used models to meaningfully guide some level of real-time response.

The most underexamined friction point in catastrophe risk response is decision latency—the elapsed time between signal and execution.

This delay shows up in three places:

- Validating incoming data across systems
- Assigning ownership for action
- Moving decisions through approval structures

Individually, each step feels reasonable. Under catastrophe conditions, those delays compound quickly.

The result is a system where data moves in minutes, but decisions still move in hours, sometimes longer.
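To make decision latency concrete, here is a minimal sketch that measures it from milestone timestamps. The milestone names and every timestamp are hypothetical and chosen only to mirror the three delay points listed above; a real carrier would pull them from its own event and workflow logs.

```python
from datetime import datetime, timedelta

# Hypothetical timeline for one catastrophe signal; all timestamps are invented.
timeline = [
    ("signal_received",   datetime(2025, 1, 8, 10, 0)),
    ("data_validated",    datetime(2025, 1, 8, 11, 30)),
    ("owner_assigned",    datetime(2025, 1, 8, 13, 0)),
    ("decision_approved", datetime(2025, 1, 8, 16, 45)),
]

def stage_delays(events):
    """Return the elapsed time between each consecutive pair of milestones."""
    return {
        later_name: later_time - earlier_time
        for (_, earlier_time), (later_name, later_time) in zip(events, events[1:])
    }

def decision_latency(events):
    """Elapsed time from the first milestone (signal) to the last (execution)."""
    return events[-1][1] - events[0][1]

delays = stage_delays(timeline)
total = decision_latency(timeline)

for stage, delay in delays.items():
    print(f"{stage}: {delay}")
print(f"decision latency: {total}")
```

In this invented timeline no single step takes longer than a few hours, yet the signal still waits 6 hours 45 minutes before anyone can act on it.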

The reason for the disconnect is that no one's built the "decision bus" connecting it all:

- Siloed Sources Create Bottlenecks: Exposure data sits in underwriting systems, claims imagery stays trapped in adjuster apps, and CAT intelligence lives in separate vendor platforms. During the 2025 Los Angeles wildfires, insured losses reached a record ~$40B, and nearly 48,000 claims were generated in Q1 alone. Underwriting teams, though, couldn’t dynamically reprice portfolios mid-event because wildfire and geospatial hazard feeds weren’t connected to exposure engines.
- Volume Crushes Velocity: 2025's $130B loss year saw secondary perils (floods post-wind, fires post-freeze) account for 70% of payouts, according to cat modeling deep dives. Models predict these in theory, but without automated cascades to claims workflows, adjusters drown. Hurricane claims routinely blow past 30-day state mandates, hitting 45-60 days in Florida and Texas.

This is consistent with post-event observations from U.S. regulators. Following large-scale events like Hurricane Milton in 2024, filings reviewed by the National Association of Insurance Commissioners showed that claim settlement timelines extended significantly despite the rapid availability of catastrophe risk intelligence. The bottleneck was not awareness; it was execution.


Why Is Most Catastrophe Response by Insurance Carriers Still Slow?

Catastrophe response is inherently cross-functional, but the industry still operates it as a series of loosely connected processes. Claims, underwriting, exposure management, and customer operations all interact with the same event but through different systems and decision frameworks.

According to McKinsey & Company, large insurers often operate across dozens of core and satellite systems with limited interoperability. That fragmentation forces data to be reconciled multiple times before action is taken.

Most claims processes in organisations are still built around steady-state assumptions: predictable volumes, sequential processing, and defined escalation paths. Catastrophic events violate all three. They introduce sudden volume spikes and highly uneven severity distributions, requiring immediate prioritization. While some platforms now support automated triage and dynamic risk scoring, these capabilities are often layered onto workflows that remain fundamentally linear.

That mismatch matters. In practice, the system can identify which claims are most urgent but still processes them in the order they arrive, treating everything like standard flow.
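The gap between knowing which claims are urgent and acting on that knowledge can be shown with a small sketch. It compares arrival-order handling with a severity-first queue built on Python's standard `heapq` module. The claim IDs and severity scores are hypothetical, and a production triage model would weigh far more than a single score.

```python
import heapq
from itertools import count

# Hypothetical claims in arrival order: (claim_id, severity score from 0 to 1).
arrivals = [("C-101", 0.20), ("C-102", 0.95), ("C-103", 0.55), ("C-104", 0.95)]

def fifo_order(claims):
    """Sequential workflow: handle claims strictly in arrival order."""
    return [claim_id for claim_id, _ in claims]

def triage_order(claims):
    """Severity-first workflow: highest score first, arrival order breaks ties."""
    arrival_index = count()
    heap = []
    for claim_id, severity in claims:
        # heapq is a min-heap, so negate severity to pop the largest first.
        heapq.heappush(heap, (-severity, next(arrival_index), claim_id))
    ordered = []
    while heap:
        _, _, claim_id = heapq.heappop(heap)
        ordered.append(claim_id)
    return ordered

print(fifo_order(arrivals))    # ['C-101', 'C-102', 'C-103', 'C-104']
print(triage_order(arrivals))  # ['C-102', 'C-104', 'C-103', 'C-101']
```

Both functions see the same scores. Only the second lets the score change what gets handled first.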

How Are Insurers Strengthening CAT Response?

The real need is to reduce the time between insight and execution. That starts with moving away from batch-style operations and toward event-driven workflows. When a storm makes landfall or flood thresholds are triggered, the response should not depend on manual escalation chains. Claims triage, policyholder identification, internal alerts, and vendor activation should begin automatically based on predefined rules.

Shift from Batch Processing to Event-Driven Workflows

Most catastrophe operations are still built around sequential processing models where claims intake, severity review, adjuster assignment, vendor coordination, and customer communication move through separate queues. That structure works during normal operating conditions but breaks down during catastrophic events when insurers must process tens of thousands of claims simultaneously across multiple regions and loss types.

Event-driven workflows fundamentally change how catastrophe operations are executed. Instead of waiting for human escalation, predefined operational triggers initiate response actions automatically as catastrophe conditions evolve. A wildfire perimeter expansion, flood threshold breach, or hurricane landfall can immediately trigger policyholder exposure analysis, claims segmentation, adjuster deployment, vendor mobilization, outbound communication, and reserve escalation in parallel rather than sequentially.
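As a rough illustration of the pattern, the sketch below maps event types to predefined actions and starts them concurrently instead of passing work from queue to queue. The event names, action functions, and the `TRIGGERS` table are all hypothetical. It uses a thread pool from Python's standard library, whereas a real deployment would more likely sit on a message broker or workflow engine.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical response actions; real ones would call claims, vendor, and
# communication systems rather than return strings.
def analyze_exposure(event):
    return f"exposure analysis started for {event['region']}"

def mobilize_vendors(event):
    return f"vendors mobilized for {event['region']}"

def notify_policyholders(event):
    return f"outbound notices queued for {event['region']}"

# Predefined rules: event type -> actions to start when that event arrives.
TRIGGERS = {
    "hurricane_landfall": [analyze_exposure, mobilize_vendors, notify_policyholders],
    "flood_threshold_breach": [analyze_exposure, notify_policyholders],
}

def handle_event(event):
    """Start every action registered for the event type concurrently."""
    actions = TRIGGERS.get(event["type"], [])
    if not actions:
        return []  # No rule registered: nothing is triggered automatically.
    with ThreadPoolExecutor(max_workers=len(actions)) as pool:
        futures = [pool.submit(action, event) for action in actions]
        # Collect results in registration order so the output is deterministic.
        return [future.result() for future in futures]

results = handle_event({"type": "flood_threshold_breach", "region": "Region A"})
for line in results:
    print(line)
```

The design choice that matters here is that the rules live in data (`TRIGGERS`) rather than in an escalation chain, so adding a response to a new kind of event means adding a table entry, not a new approval step.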

Create a Unified Decision Layer Across Functions

One of the biggest causes of delay is fragmentation across claims, underwriting, exposure management, and customer operations. The diagnosis is clear: different systems, conflicting data sources, duplicated validation work, and teams prioritizing functional ownership over shared decision control. The harder reality is that there is no quick fix. Creating a single operational view requires reworking workflows, governance, and decision rights across functions, not just adding another dashboard.

Use Automation Where Speed Matters Most

The goal is not full automation everywhere but targeted automation where it creates the most value. Low-complexity claims, severity scoring, customer outreach triggers, and vendor assignments should not require repeated manual review. The barrier is usually low confidence in data accuracy, fear of incorrect payouts, audit risk, and resistance to removing human approvals from established workflows.
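One way to picture targeted automation is a routing rule that fast-tracks a claim only when it is low in complexity, small in value, and backed by trusted data, and sends everything else to a person. The fields and thresholds below are hypothetical placeholders, not recommended values. A carrier would set them together with its audit and compliance teams.

```python
from dataclasses import dataclass

@dataclass
class Claim:
    claim_id: str
    estimated_loss: float    # in dollars
    data_confidence: float   # 0 to 1, how much the input data is trusted
    complexity: str          # "low", "medium", or "high"

# Hypothetical thresholds, shown only to illustrate the shape of the rule.
MAX_AUTO_LOSS = 5_000.0
MIN_CONFIDENCE = 0.90

def route(claim):
    """Return 'auto' for fast-track handling, otherwise 'manual_review'."""
    if (claim.complexity == "low"
            and claim.estimated_loss <= MAX_AUTO_LOSS
            and claim.data_confidence >= MIN_CONFIDENCE):
        return "auto"
    return "manual_review"

print(route(Claim("C-1", 1_200.0, 0.97, "low")))    # auto
print(route(Claim("C-2", 1_200.0, 0.60, "low")))    # manual_review
print(route(Claim("C-3", 80_000.0, 0.99, "high")))  # manual_review
```

Keeping the confidence check inside the rule speaks directly to the fear of incorrect payouts: when the data is not trusted, the claim falls back to human review by default.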


Turn Pre-Event Planning into Operational Readiness

Scenario planning is often treated as a capital modeling exercise, but it should also prepare operations before an event occurs. The problem is that CAT planning often stays inside actuarial or risk teams and never translates into operational playbooks for claims, vendors, and field response. When the event arrives, the playbook should already be active.

Move Policyholder Communication Earlier

Most insurers still engage customers after damage has already happened. The obstacle is often limited visibility into live exposure, outdated contact workflows, and uncertainty around when proactive outreach should be triggered. Real-time alerts tied to policyholder exposure should drive earlier communication and mitigation guidance.
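A simple version of an exposure-tied alert is a distance check between a hazard location and each insured property. The sketch below uses the haversine great-circle formula. The policyholder records, coordinates, and 10 km radius are hypothetical, and real hazard footprints such as wildfire perimeters or flood zones are polygons rather than circles, so treat this as a first approximation only.

```python
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_KM = 6371.0

def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometers between two latitude/longitude points."""
    lat1, lon1, lat2, lon2 = map(radians, (lat1, lon1, lat2, lon2))
    a = (sin((lat2 - lat1) / 2) ** 2
         + cos(lat1) * cos(lat2) * sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * asin(sqrt(a))

def policyholders_to_alert(policyholders, hazard_lat, hazard_lon, radius_km):
    """Return IDs of policyholders located within radius_km of the hazard point."""
    return [
        p["id"] for p in policyholders
        if haversine_km(p["lat"], p["lon"], hazard_lat, hazard_lon) <= radius_km
    ]

# Hypothetical insured locations and hazard point.
policyholders = [
    {"id": "P1", "lat": 34.05, "lon": -118.0},  # roughly 5.6 km from the hazard
    {"id": "P2", "lat": 35.00, "lon": -118.0},  # roughly 111 km from the hazard
]
print(policyholders_to_alert(policyholders, 34.0, -118.0, radius_km=10))
```

Running the check whenever a hazard feed updates, rather than after first notice of loss, is what moves the outreach earlier.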

Focus on Decision Speed, Not Just Data Quality

Most carriers already have access to strong catastrophe intelligence. The harder problem is turning intelligence into action across approvals, systems, and frontline execution. The competitive advantage is no longer who has the best data—it is who can act on it first.

The Next Step

The industry has spent years improving how quickly it can understand catastrophic risk. The next phase is improving how quickly it can respond to it.

That requires a shift from data-centric operations to decision-centric operations, from risk intelligence to action intelligence. Because during a catastrophe, speed is not just an operational advantage. It is the strategy.

